We’re live in Las Vegas for AWS re:Invent 2023, the technology giant’s annual flagship event.

We’re expecting a host of new announcements, upgrades and releases this week, all sprinkled with a hefty dose of AI, no doubt.

The event kicks off this morning with an opening keynote from AWS CEO Adam Selipsky, with further keynotes from Swami Sivasubramanian, Vice President of AWS Data, and Amazon CTO Dr. Werner Vogels to come later in the week.

Happy re:Invent 2023! After arriving last night and getting through a jet-lagged day, we’re ready for one more night’s sleep before the main event kicks off tomorrow.

That’s not to say AWS has been cruising into the event, though: today it announced Amazon WorkSpaces Thin Client, a low-cost, enterprise-focused device for accessing virtual desktops that should help businesses everywhere.

Welcome to the official day one of AWS re:Invent 2023! We’re (relatively) well-rested and about to get caffeinated, so are nearly ready for the big kick-off shortly.

This morning kicks off with a keynote from AWS CEO Adam Selipsky, who will no doubt be unveiling a host of new products and services, as well as bringing customers and other friends on stage.

The keynote begins at 8:00am PT, so there’s just over an hour to go.

In true Vegas tech conference fashion, it’s 7:35am and we’re being assaulted by full-throttle ’80s rock covers (although the band is pretty decent).

Mr. Brightside, anyone?

Now we have a Back in Black vs. Wonderwall mash-up… if this weren’t Vegas, I’d be confused.

20 minutes until the keynote…

With a few minutes to go, the keynote theatre is packed out with AWS fans!

The band is closing out with “My Hero” by Foo Fighters – requested by Adam Selipsky himself apparently!

And with that, the band is done, and it’s keynote time!

AWS CEO Adam Selipsky takes to the stage to rapturous applause, welcoming us to the 12th re:Invent event – there are apparently 50,000 people here this week.

Selipsky starts with a run-down of the customers AWS is working with, from financial to healthcare, manufacturing and entertainment.

Salesforce gets a special mention, with Selipsky highlighting the newly-announced partnership between the two companies. Salesforce is expanding its “already large use” of AWS, he notes, with Bedrock and Einstein working together to help developers build generative AI apps faster.

Salesforce is also putting its apps on the AWS Marketplace.

But start-ups are also choosing AWS, Selipsky notes, with over 80% of unicorns running on the company’s platform – from genomics mapping to guitar-making.

“Reinventing is in our DNA,” Selipsky notes, adding that’s how cloud computing as a whole came about.

This has evolved into making sure companies of all sizes have access to the same technology.

AWS now extends to 32 regions around the world – no other cloud provider offers that, Selipsky notes.

Each region contains multiple Availability Zones (AZs), meaning a region can stay in operation even in the event of an emergency or an outage.
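A quick back-of-the-envelope illustration of why multiple AZs matter: if each zone is independently available some fraction of the time, the chance that every zone in a region is down at once shrinks fast as zones are added. This is a simplified sketch under our own assumptions, not an AWS figure: the 99% per-zone availability is hypothetical, and real-world outages can be correlated across zones.

```python
def region_availability(zone_availability: float, zones: int) -> float:
    """Chance that at least one zone is up, ASSUMING zones fail independently."""
    # Probability that every zone is down at the same moment
    all_down = (1.0 - zone_availability) ** zones
    return 1.0 - all_down


if __name__ == "__main__":
    # Hypothetical per-zone availability of 99%, not an AWS number
    for n in (1, 2, 3):
        print(f"{n} zone(s): {region_availability(0.99, n):.6f}")
```

Under those assumptions, three zones at 99% each work out to roughly 99.9999% for the region as a whole, which is the intuition behind spreading workloads across AZs.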

“Others would have you believe cloud is all the same – that’s not true,” he notes.

AWS offers three times as many data centers as the next-closest cloud provider, 60% more services, and 40% more features, and that’s what helps it stand apart from the competition, Selipsky says.

Selipsky runs through some of the history of Amazon storage, looking back at how Amazon S3 has evolved.

Now, it’s time for the next step forward in this journey, he says – Amazon S3 Express One Zone.

Designed for your most frequently accessed data, it supports millions of requests per minute and is up to 10x faster than S3 Standard storage. It looks like a huge step forward for users everywhere.

Now, we move on to general-purpose computing – it’s Graviton time.

Selipsky looks back to the initial launch in 2018, before Graviton2 in 2020 and Graviton3 in 2022.

Now though, it’s time for an upgrade, namely AWS Graviton4 – the most powerful and energy-efficient chip the company has ever built, Selipsky says.

The chips offer up to 30% better compute performance than Graviton3, and are up to 40% faster for database applications and up to 45% faster for large Java applications.

All this adds up to a full suite designed to help your business, Selipsky says.

Here we go – it’s AI time.

“Generative AI is going to reinvent every application we interact with at work or home,” Selipsky says, noting how AWS has been investing in AI for years, using it to build tools such as Alexa.

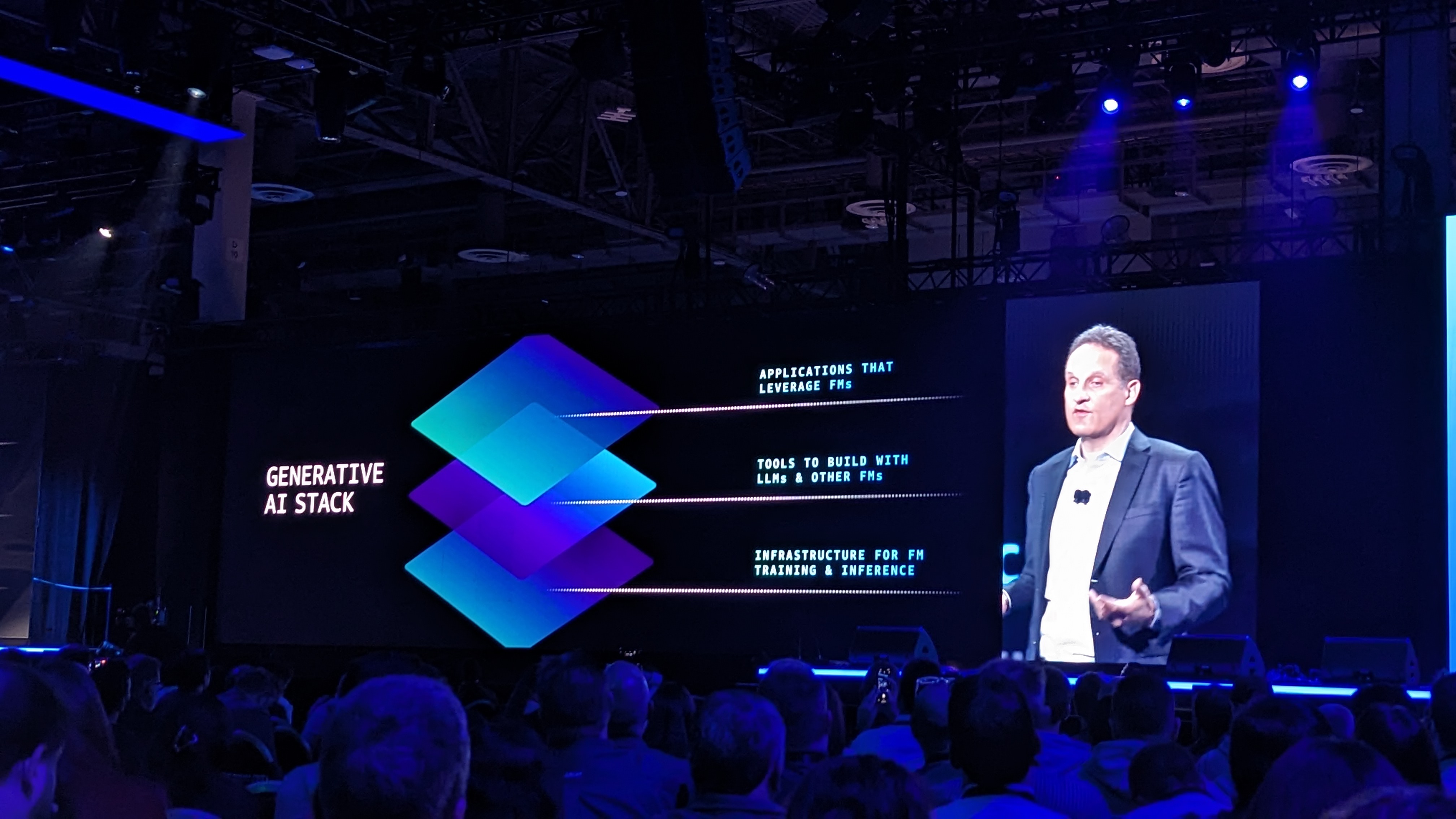

The generative AI stack has three layers, he explains, starting with infrastructure. AI uses huge amounts of compute power, so getting the most out of your stack is vital.

Selipsky highlights AWS’s close work with Nvidia to help further the training and development of AI.

But you also need high-performance clusters alongside GPUs, with AWS providing incredibly advanced and flexible offerings that allow customers to scale up when needed.

All of this is built on AWS Nitro, which “reinvented virtualization”, allowing for efficiency and productivity gains all round, Selipsky says.

Selipsky reveals an expansion of AWS and Nvidia’s relationship, and introduces a special guest – Nvidia CEO Jensen Huang!

Huang announces the deployment of a whole new family of GPUs on AWS, including Nvidia GH200 Grace Hopper Superchips, offering a huge step forward in power and performance when it comes to LLMs and AI.

Nvidia is deploying 1 zettaflop of computing capacity per quarter – a staggering amount.

There’s a second big announcement – Nvidia DGX Cloud is coming to AWS as well.

This is Nvidia’s AI factory, Huang notes – “this is how we develop AI”.

DGX Cloud will be the largest AI factory Nvidia has ever built, including Project Ceiba: 16,384 GPUs connected into one AI supercomputer delivering a stunning 65 exaflops of power. “It’s utterly incredible,” Huang notes.
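For a sense of scale, the two figures Huang quoted can be divided to get a rough per-GPU throughput. This is our own arithmetic on the stage numbers; the keynote didn’t state the numeric precision behind the 65 exaflops figure, so treat the result as a ballpark rather than a spec.

```python
EXAFLOPS = 1e18
PETAFLOPS = 1e15

total_flops = 65 * EXAFLOPS  # Project Ceiba figure quoted on stage
gpu_count = 16_384           # GPU count quoted on stage

# Split the system total evenly across GPUs, then express it in petaflops
per_gpu_petaflops = total_flops / gpu_count / PETAFLOPS
print(f"~{per_gpu_petaflops:.2f} petaflops per GPU")
```

That comes out at just under 4 petaflops per GPU.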

With those two (frankly ridiculous) announcements, Huang departs to huge applause.

Selipsky now moves on to capacity – getting access to all this compute.

Customers often need clustered capacity brought together, but don’t need all of it all the time. Fluctuating demands call for short-term clustered capacity, but no major cloud provider offers this.

Luckily, the new Amazon EC2 Capacity Blocks for ML will now make this possible, letting customers reserve hundreds of GPUs in a single offering so they have the capacity they need, when they need it.

Moving on to the silicon level, Selipsky focuses on Amazon EC2 Inf2 instances, which now deliver higher throughput and lower latency than ever before.

AWS Trainium is seeing major uptake among companies looking to train generative AI models – but as models get bigger, the needs get greater.

To cope with this, Selipsky reveals AWS Trainium2, up to four times faster than the first-generation chip, making it better suited to training huge models with hundreds of billions of parameters.

Now it’s on to Amazon SageMaker, which has played a huge role in AWS’s ML and AI work over the years, and now has tens of thousands of customers across the world, training models with billions of parameters. But there’s no update for this platform today…

Now, moving on from infrastructure to models. Selipsky notes that customers often have many pertinent questions about how best to implement and use AI, and AWS wants to help with its Bedrock platform.

Bedrock lets users build and scale generative AI applications with LLMs and other foundation models (FMs), and also offers significant customization and security advantages. Over ten thousand customers are using Bedrock already, and Selipsky expects that number to keep growing.

“It’s still early days,” Selipsky notes, highlighting how different models often work better in different use cases – “the ability to adapt is the most important ability you can have.”

To help with this, AWS is looking to expand its offerings for customers – “you need a real choice”, Selipsky notes.

To that end, he emphasizes how Bedrock provides access to a huge range of models, including those from Claude maker Anthropic.

He welcomes Anthropic CEO and co-founder Dario Amodei to the stage to talk more.

Amodei notes that AWS will be Anthropic’s main cloud provider for mission-critical workloads, allowing it to train more advanced versions of Claude for the future.

After a glowing review, Amodei heads off, and Selipsky turns to Amazon Titan models, the AI models Amazon is building itself, drawing on 25 years of experience with AI and ML.

These models can be used for a variety of use cases, such as text-based tasks, copy editing and writing, and search and personalization tools.

More Titan models will be coming soon – but more on that in tomorrow’s keynote, apparently…

Using your own data to create a model that’s customized to your business is vital, Selipsky notes, and Amazon Bedrock’s new fine-tuning tools allow just that: the model learns from your data to produce tailored responses.

There’s also new Retrieval Augmented Generation (RAG) support through Knowledge Bases for Amazon Bedrock, allowing even further customization.
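The basic RAG idea is simple: fetch the most relevant snippets from your own documents and put them into the prompt, so the model answers from your data rather than only from what it was trained on. Below is a minimal, self-contained sketch of that retrieve-then-prompt pattern. It is not the Knowledge Bases for Amazon Bedrock API: the word-count cosine similarity stands in for a real embedding model and vector store, and the documents and function names are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query (stand-in for a vector search)."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 1) -> str:
    """Augment the question with retrieved context before it is sent to a model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    docs = [  # hypothetical company documents
        "Our refund window is 30 days from delivery.",
        "The office is closed on public holidays.",
        "Support is available by email around the clock.",
    ]
    print(build_prompt("How many days is the refund window?", docs))
```

In a managed setup, the retrieval step would query an indexed store of your documents, but the shape of the flow (retrieve, then augment the prompt) stays the same.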

Now, how do you actually use FMs to get stuff done? Selipsky says this can often be a complex process, but fortunately there’s the new Agents for Amazon Bedrock tool, which can carry out multi-step tasks across company systems and data sources, allowing models to be more customized and optimized for specific use cases.
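Conceptually, an agent breaks a request into steps and calls the relevant company system for each one, carrying results forward. The sketch below shows only that orchestration loop. It is a hypothetical illustration, not the Agents for Amazon Bedrock API: the tools and order data are made up, and the plan is hard-coded where a real agent would have the foundation model choose the steps.

```python
from typing import Callable

State = dict


# Hypothetical stand-ins for company systems an agent might call
def lookup_order(state: State) -> State:
    orders = {"A-100": {"item": "headphones", "status": "delivered"}}
    state["order"] = orders[state["order_id"]]
    return state


def check_refund_policy(state: State) -> State:
    # Made-up rule: only delivered orders are refundable
    state["eligible"] = state["order"]["status"] == "delivered"
    return state


def draft_reply(state: State) -> State:
    verdict = "is eligible" if state["eligible"] else "is not eligible"
    state["reply"] = f"Order {state['order_id']} ({state['order']['item']}) {verdict} for a refund."
    return state


TOOLS: dict[str, Callable[[State], State]] = {
    "lookup_order": lookup_order,
    "check_refund_policy": check_refund_policy,
    "draft_reply": draft_reply,
}


def run_agent(plan: list[str], state: State) -> State:
    """Execute each planned step in order, passing the shared state along."""
    for step in plan:
        state = TOOLS[step](state)
        state.setdefault("trace", []).append(step)  # record which tools ran
    return state


if __name__ == "__main__":
    # Hard-coded plan; in a real agent the model would produce these steps
    plan = ["lookup_order", "check_refund_policy", "draft_reply"]
    print(run_agent(plan, {"order_id": "A-100"})["reply"])
```

Keeping each tool as a plain function over shared state makes the multi-step flow easy to trace, which is the part a managed agent service takes off your hands.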